How to Use LLMs to Enhance Data Extraction From Unstructured Text

How combining LLMs with schema validation solves the extraction problem that NLP never could

🇩🇪 Before starting this article, let me remind you that on Friday the 15th, there will be the first TWSC meetup in Munich. For more details and to confirm your attendance, go to the event page 🇩🇪

The web contains an extraordinary volume of information, the majority of which is in textual form. Blogs, forums, and newsletters alone generate millions of words of domain-specific knowledge every week. And they’re not the only sources of text on the web.

When you want to get insights from that kind of data, successfully extracting it from the web is only half of the battle, even now that LLMs can use vision to scrape complex visual layouts. The second part of the challenge is structuring this data to get it ready for analytics. Why? Because when you point a scraper at a news article, you get back a wall of text. But you cannot query it. You cannot aggregate it. You cannot feed it reliably into a machine learning pipeline or a database without significant preprocessing.

This article addresses the preprocessing problem of unstructured text when you scrape it from the web. It traces the evolution of solutions from classical NLP to large language models, identifies where each approach breaks down, and proposes a practical architectural solution.

Let’s get into it!

Before proceeding, let me thank NetNut, the platinum partner of the month. They have prepared a juicy offer for you: up to 1 TB of web unblocker for free.

What “Unstructured” Really Means in Practice

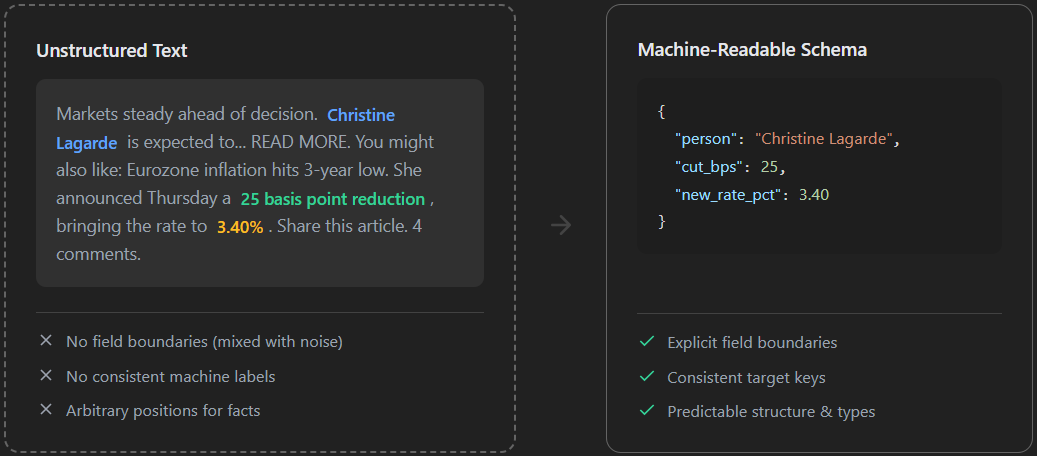

Unstructured text refers to content that carries no machine-readable schema. The information exists in the data you retrieved from the web, but it has no field boundaries, no consistent labels, and no guaranteed position for any given fact.

The following schema represents the difference between unstructured and structured text (machine-readable schema):
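To make the contrast concrete in code, here is a minimal sketch. The record fields (`institution`, `rate_pct`, `decision_date`) and all the values are hypothetical, invented only for illustration.

```python
from dataclasses import dataclass
from datetime import date

# Unstructured: the fact is in there, but nothing marks where it starts or ends.
raw_text = "The bank said on Thursday it would lower its key rate to 3.40%, as expected."

# Structured: every fact has a name, a type, and a fixed position.
@dataclass
class RateDecision:
    institution: str
    rate_pct: float
    decision_date: date

# Hypothetical records, not real data.
records = [
    RateDecision("Bank A", 3.40, date(2025, 1, 9)),
    RateDecision("Bank B", 4.25, date(2025, 1, 16)),
]

# Aggregating and querying is a one-liner on structured records.
average_rate = sum(r.rate_pct for r in records) / len(records)
latest = max(records, key=lambda r: r.decision_date)

# The raw string only supports text operations, such as counting words.
word_count = len(raw_text.split())
print(average_rate, latest.institution, word_count)
```

The point of the sketch is only that the second form can be averaged, sorted, and filtered directly, while the first cannot.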

Let’s consider three concrete scraping targets to illustrate what this costs you in practice.


News Articles: When Signals Are Buried in Noise

Suppose you scraped a Reuters article about an ECB rate decision. The text you get back from the scraper could look like this:

European Central Bank decides on rates.

Listen to this article. 2 min audio.

You might also like: Eurozone inflation hits 3-year low.

Christine Lagarde announced Thursday a 25 basis point reduction, bringing the main

refinancing rate to 3.40%.

SPONSORED: Track macro events with Bloomberg Terminal.

The decision was widely anticipated after last month's CPI print. Share this article.

4 comments. John M. writes: this was priced in already

Your raw text contains the article body, a teaser for a related story, a sponsored insertion, and reader comments. The fact you want is buried in there: the ECB cut its main refinancing rate to 3.40% on a specific date. But your extractor gets the full content.

Such a wall of text, which in practice is far bigger than this example, is useless for analytics purposes without preprocessing.

Financial Newsletters: When “Just Under Two Percent” Breaks Your Aggregation

Suppose you scrape a financial newsletter to extract an updated macroeconomic forecast. You need to capture a specific fact, something like “Goldman Sachs revised its 2026 US GDP growth forecast down to 1.8%”. Your scraper captures the entire page output. As in the previous example, the resulting raw text mixes the core facts with boilerplate and unrelated news:

Market Daily Newsletter. November 12.

Jan Hatzius (Goldman Sachs) and his team were out with a note early Tuesday.

SPONSORED: Get 50% off your trading fees today.

They see tariffs shaving roughly 0.7 points off the baseline. Meanwhile,

European markets rallied on ECB news.

Read our full coverage of the Eurozone here.

The revised number now sits just under two percent for the full year.

Subscribe for premium insights.

The target fact is spread across the entire document. Also, the wording “just under two percent” requires numerical understanding to recognize that the text refers to the number you were searching for, that is, 1.8%.

Now, imagine generalizing this: you scrape hundreds of financial news articles and newsletters and want to group the information and summarize the numbers. Getting insights would be impossible. Why? Because some sources will give you the actual figure you want (“growth forecast down to 1.8%”), while others will use different phrasing to describe the trend (“An expected growth under 2 percent”, “a slightly shrinking trend”, etc.).

Without a way to create a structure for such data, you can’t get any insights from it.
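A short sketch shows why pattern matching alone does not give you that structure. The snippets reuse the phrasings quoted above. The regular expression and the `extract_percent` helper are assumptions made for this illustration, not part of any library.

```python
import re
from typing import Optional

# Different phrasings of the same kind of fact, as quoted in the text above.
snippets = [
    "Goldman Sachs revised its 2026 US GDP growth forecast down to 1.8%.",
    "The revised number now sits just under two percent for the full year.",
    "An expected growth under 2 percent.",
    "A slightly shrinking trend.",
]

# A naive pattern: an integer or decimal number followed by a percent sign.
PERCENT_PATTERN = re.compile(r"(\d+(?:\.\d+)?)\s*%")

def extract_percent(text: str) -> Optional[float]:
    """Return the first 'N%' figure found in the text, or None if there is none."""
    match = PERCENT_PATTERN.search(text)
    return float(match.group(1)) if match else None

results = [extract_percent(s) for s in snippets]
print(results)
```

Only the first snippet yields a number. You could keep adding patterns for "percent" and for spelled-out numbers, but each new source tends to add another variant, which is exactly the maintenance cost described above.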

Job Posting Offers: They Are Always Messier Than They Look

Consider the case when you want to scrape job offers to get an idea of what the market is paying on average for a specific position, given the expected technical skills, and considering the same day-to-day activity. Job offers can have the following ambiguities:

A sentence might read “3+ years of experience with Python”. This establishes a floor and ignores a ceiling. Alternatively, the text might read “Senior-level candidates only”. This uses qualitative seniority as a proxy for an exact quantitative number.

Salary breaks in a different direction. One posting can say “$120,000 - $145,000 base”. Another can be “competitive compensation commensurate with experience”. A third could be “€100,000”, which you need to convert to dollars to make an actual comparison.

Employment type can introduce further ambiguity and difficulties. “Full-time”, “FTE”, “permanent”, and “direct hire” basically mean the same thing but are written differently. Also, the text might specify the role is “Hybrid”, which means multiple different things across companies. It could mean two days in the office. It could mean occasional travel with headquarters-optional rules.
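As a rough illustration of how far a hand-written lookup gets you with these labels, consider the sketch below. The synonym table and the canonical label `full_time` are hypothetical choices made for this example.

```python
from typing import Optional

# Hypothetical synonym table: surface forms seen in postings -> canonical label.
EMPLOYMENT_TYPE_SYNONYMS = {
    "full-time": "full_time",
    "fte": "full_time",
    "permanent": "full_time",
    "direct hire": "full_time",
}

def normalize_employment_type(raw: str) -> Optional[str]:
    """Map a raw label to a canonical one, or return None when it is unknown."""
    return EMPLOYMENT_TYPE_SYNONYMS.get(raw.strip().lower())

labels = ["Full-time", "FTE", "Direct Hire", "Hybrid", "Perm."]
normalized = [normalize_employment_type(label) for label in labels]
print(normalized)
```

The first three labels resolve, but "Perm." needs yet another manual entry, and "Hybrid" cannot be resolved by a lookup at all, because its meaning depends on the rest of the posting.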


How Classical NLP Tried to Solve This (and Where It Stopped)

Before large language models were released, the standard answer to this problem was Natural Language Processing. The classical NLP toolkit gave developers a set of tools that could, with enough effort, extract meaningful structure from text using different, but often interconnected, processes like the following:

Named Entity Recognition (NER): NER is a process used in NLP to extract entities from text corpora. In particular, it identifies spans of text as persons, organizations, locations, or dates. An NLP model trained on news corpora, for example, is able to scan an article and tag “Jane Doe” as a person and “Washington D.C.” as a geopolitical entity.

Part-of-speech tagging: A process in which NLP models label each word as a noun, verb, adjective, and so on. This enables the downstream logic to focus on the right parts of a sentence.

Dependency parsing: Maps grammatical relationships between words, helping to extract which subject performed which action on which object.

Relation extraction: Identifies when two co-occurring entities have a specific relationship. For example, a person who was affiliated with an organization, or an event that occurred in a specific location.

Libraries like spaCy, Stanford NLP, and NLTK made these processes widely accessible. But they work well only for well-defined, narrow tasks on consistent text domains. The problems and limitations of this solution appear quickly at the edges:

Domain shift breaks everything: A NER model trained on news articles performs poorly on scientific abstracts. A model tuned for English financial text fails on multilingual content. In other words, every new domain requires retraining, re-labeling, and re-evaluation. These processes are very costly, both in terms of money and time.

Context is invisible: Classical NLP models operate at the token and sentence level. They have no mechanism for understanding that “Apple” in a technology article refers to a corporation, while “apple” in a nutrition blog refers to a fruit. Disambiguation requires hand-crafted rules or separate classification layers bolted on top (which, again, is costly).

Before NLP, you could basically only use regex (with all the difficulties associated with manually cleaning data, standardizing it, and… using regex!). So, NLP was a genuine (big) step forward: it made large-scale text analysis possible in ways that pure pattern matching never could. But it still required substantial domain expertise and constant maintenance, and it produced results that were narrow, fragile, and difficult to generalize.

The Modern Solution: LLMs as Universal Structure Extractors

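Large language models fundamentally changed the extraction problem. On the side of the underlying technology, a classical NLP model learns statistical patterns for one narrow task, usually from labeled examples in a single domain. An LLM, instead, is pretrained on a very broad corpus and learns to model language in context, which in practice lets it behave as if it understands what it reads. This distinction matters enormously because it opened the doors to the following: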

Context disambiguation that works out of the box: Feed an LLM with a paragraph from a technology article containing the word “Apple” and it will correctly identify it as a company. Feed it with a paragraph from a recipe blog, and it will correctly identify it as a fruit. No separate disambiguation layer. The model resolves ambiguity the same way a human reader does: by reading the surrounding context.

Semantic equivalence that is understood, not computed: An LLM knows that “$40,” “forty dollars,” “40 USD,” and “forty bucks” all express the same value. You don’t need to instruct it to understand that.

Implicit information that becomes accessible: A sentence like “the study, conducted over three months at a Boston hospital, found no significant effect” contains a location, a duration, and a finding. An LLM can extract all three without requiring the text to follow any particular structure.

Domain generalization that requires no retraining: The same LLM that extracts entities from political news articles can extract findings from scientific abstracts, event mentions from cultural journalism, and source attributions from investigative reporting. You just need to change the prompt, not the model.

The practical workflow becomes straightforward:

You scrape unstructured text from the web.

You pass the content to an LLM with a prompt that describes what you want to extract.

The model returns a response.

You use that response downstream.
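The four steps above can be sketched as two small functions. To keep the example runnable offline, the model call is injected as a plain callable and replaced by a stand-in. `build_prompt`, `extract`, and `fake_llm` are hypothetical names introduced for this sketch.

```python
from typing import Callable

def build_prompt(instructions: str, scraped_text: str) -> str:
    """Step 2: combine the extraction instructions with the scraped content."""
    return f"{instructions}\n\nArticle:\n{scraped_text}"

def extract(scraped_text: str, instructions: str, call_llm: Callable[[str], str]) -> str:
    """Steps 2 and 3: send the prompt to the model and return its raw response."""
    prompt = build_prompt(instructions, scraped_text)
    return call_llm(prompt)

def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call (assumption: a real client would go here)."""
    return '{"rate": "3.40%"}'

# Step 1 would be your scraper; here the text is hard-coded.
scraped = "Christine Lagarde announced Thursday a 25 basis point reduction."
response = extract(scraped, "Return the new main refinancing rate as JSON.", fake_llm)

# Step 4: whatever you do downstream starts from a plain string.
print(type(response).__name__, response)
```

Note that the value handed to the downstream step is just a string. Nothing in this flow checks its shape or its types.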

This process works. But using LLMs alone introduces a different class of problems:

Output format is not guaranteed: Ask an LLM to return a price, and it might return $40 in one run, 40 dollars in another, and 40 USD in a third. The model understands the value when it retrieves it from scraped content. But it does not guarantee how it expresses that value unless you explicitly constrain it.

Required fields can go missing: If the article you extracted the content from does not mention a publication date, the model might omit the field, return null, return "not mentioned", or invent a plausible date (which is way worse). Each behavior is different, and none of them is predictable without enforcement.

Hallucination is a real risk: When the model is uncertain, it can still generate a plausible-sounding answer. For extraction tasks, that means it can invent entity names, fabricate statistics, or fill in missing information with confident-sounding fiction. Without validation, these errors pass into your data, creating issues at the analytics level.

Generalizing all of this, you also get scalability issues because you have no consistency guaranteed. A pipeline processing 10,000 articles requires every output to follow the same schema. But a model that returns slightly different structures across runs cannot feed a database reliably without significant error handling.
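To give an idea of what that error handling looks like, below is a minimal sketch of a defensive parser for raw model replies. The set of required keys and the `parse_raw_output` helper are assumptions made for illustration. A real pipeline would need more checks than these.

```python
import json

FENCE = "`" * 3  # Markdown code fence that models sometimes wrap around JSON
REQUIRED_KEYS = {"title", "publication_date", "cpi_march_value"}

def parse_raw_output(raw: str) -> dict:
    """Parse a raw LLM reply into a dict, raising ValueError on any problem."""
    text = raw.strip()
    if text.startswith(FENCE):
        # Drop the surrounding backticks and an optional "json" language tag.
        text = text.strip("`").strip()
        if text.startswith("json"):
            text = text[len("json"):]
    try:
        data = json.loads(text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    return data

good = '{"title": "T", "publication_date": "2026-04-14", "cpi_march_value": 3.5}'
replies = [
    good,
    FENCE + "json\n" + good + "\n" + FENCE,
    "Sure! Here is the JSON you asked for.",
    '{"title": "T"}',
]

outcomes = []
for reply in replies:
    try:
        parse_raw_output(reply)
        outcomes.append("ok")
    except ValueError:
        outcomes.append("error")
print(outcomes)
```

Even this version only checks that the keys exist. It says nothing about whether a date is really a date or a number is really a number, which is where schema validation comes in.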

In other words, LLMs provide you with the understanding that NLP lacked. But they do not, on their own, provide the structural guarantees that production pipelines require.

How to Get Semantic Power and Structural Guarantees at the Same Time: A Practical Approach

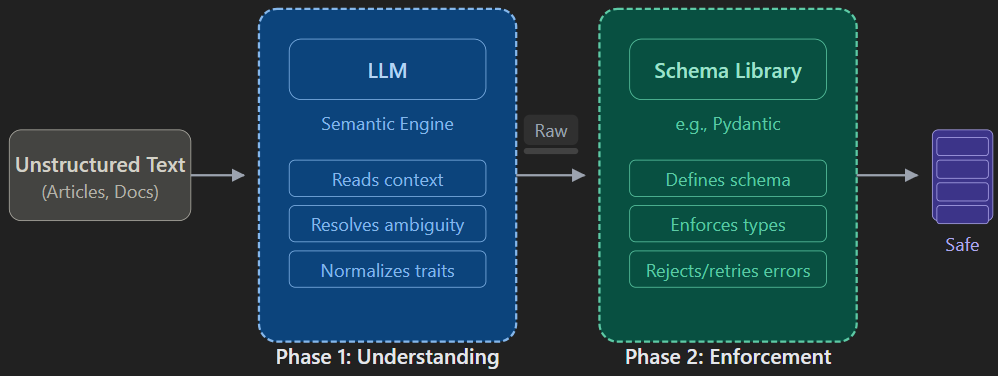

One possible solution to the unpredictability of LLM outputs is to separate the two concerns that these models conflate: semantic understanding and structure enforcement.

To do so, you can:

Use the LLM for what it does well: reading text, resolving ambiguity, extracting meaning, and normalizing inconsistent expressions.

Use specific libraries to define schemas, enforce types, validate outputs, and reject malformed data before it enters your pipeline.

Below is how this solution works, at a high level:

Let’s see how to implement this process and how the two approaches differ in practice.

The Baseline Approach: A Direct LLM Call (and What It Gives You)

Consider the following content that can come from scraping a news article:

Fed Signals Caution as Inflation Data Disappoints

By Sarah M. Connelly | April 14, 2026 | Economics & Policy

The Federal Reserve signaled on Monday that it remains in no rush to cut interest rates,

after fresh inflation data showed consumer prices rose more than expected last month.

Jerome Powell, speaking at a conference in Washington, said the central bank needs

"greater confidence" that inflation is moving sustainably toward its two-percent target

before reducing borrowing costs.

The Consumer Price Index climbed 3.5 percent in March, up from 3.2 percent in February,

according to the Labor Department. Economists polled by Reuters had forecast a reading

of 3.3 percent. Core CPI, which strips out food and energy, rose 3.8 percent year-over-year.

Markets reacted sharply. The S&P 500 fell nearly one point five percent by midday,

while the yield on the 10-year Treasury note jumped to four point six percent.

Goldman Sachs revised its forecast, pushing back its expected first rate cut from June

to September. JPMorgan analysts said two cuts in 2026 now look "optimistic."

Powell emphasized that the Fed is not considering rate hikes at this stage, but stressed

that the path back to two percent inflation "may take longer than previously thought."

The next Fed meeting is scheduled for the first week of May.

To pass it directly to a GPT model and ask for a precise output, you can use the following code:

import os
import json
from openai import OpenAI

# Scraped content
SCRAPED_TEXT = """
Fed Signals Caution as Inflation Data Disappoints
By Sarah M. Connelly | April 14, 2026 | Economics & Policy
The Federal Reserve signaled on Monday that it remains in no rush to cut interest rates,
after fresh inflation data showed consumer prices rose more than expected last month.
Jerome Powell, speaking at a conference in Washington, said the central bank needs
"greater confidence" that inflation is moving sustainably toward its two-percent target
before reducing borrowing costs.
The Consumer Price Index climbed 3.5 percent in March, up from 3.2 percent in February,
according to the Labor Department. Economists polled by Reuters had forecast a reading
of 3.3 percent. Core CPI, which strips out food and energy, rose 3.8 percent year-over-year.
Markets reacted sharply. The S&P 500 fell nearly one point five percent by midday,
while the yield on the 10-year Treasury note jumped to four point six percent.
Goldman Sachs revised its forecast, pushing back its expected first rate cut from June
to September. JPMorgan analysts said two cuts in 2026 now look "optimistic."
Powell emphasized that the Fed is not considering rate hikes at this stage, but stressed
that the path back to two percent inflation "may take longer than previously thought."
The next Fed meeting is scheduled for the first week of May.
"""

# Define LLM client
raw_client = OpenAI(api_key=os.environ.get("YOUR_OPENAI_API_KEY"))

# Define prompt for the LLM
raw_prompt = """
Extract the following information from the article below and return it as JSON:
- title
- author
- publication_date
- mentioned_organizations
- cpi_march_value
- key_claim
- market_sentiment
Article:
""" + SCRAPED_TEXT

# Get response from LLM
raw_response = raw_client.chat.completions.create(
    model="gpt-4o",
    messages=[{"role": "user", "content": raw_prompt}]
)
raw_output = raw_response.choices[0].message.content

# Print results
print(raw_output)

The result will be as follows:

{
  "title": "Fed Signals Caution as Inflation Data Disappoints",
  "author": "Sarah M. Connelly",
  "publication_date": "April 14, 2026",
  "mentioned_organizations": [
    "Federal Reserve",
    "Labor Department",
    "Reuters",
    "Goldman Sachs",
    "JPMorgan"
  ],
  "cpi_march_value": "3.5 percent",
  "key_claim": "The Federal Reserve is in no rush to cut interest rates and needs greater confidence that inflation is moving sustainably toward its two-percent target before reducing borrowing costs.",
  "market_sentiment": "Negative"
}

Now, at first sight, this seems good. The prompt asked the GPT model to return JSON with specific fields, and the model was able to do so. But two major problems affect the next steps when analyzing this data. They are:

The publication date is reported as “April 14, 2026”. This is not in ISO 8601 format (YYYY-MM-DD) and will break any parser that expects it.

The CPI is reported as “3.5 percent”, which is a string. It is not a number (a float), which is what you need if you want to analyze the value further without any intermediate steps.

So, the LLM was able to give structure to an unstructured text, after being specifically prompted to do so. But it failed at providing the data in the right format. To do so, you have to provide specific guidance to the model.
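For reference, this is roughly what those intermediate steps look like if you clean the raw output by hand. It is a minimal sketch that covers only the two formats seen in the output above. The helper names are hypothetical.

```python
from datetime import datetime

# Values exactly as the unconstrained call returned them in the example above.
raw_record = {"publication_date": "April 14, 2026", "cpi_march_value": "3.5 percent"}

def to_iso_date(text: str) -> str:
    """Convert 'April 14, 2026' to '2026-04-14'. Assumes English month names."""
    return datetime.strptime(text, "%B %d, %Y").date().isoformat()

def to_float_percent(text: str) -> float:
    """Convert '3.5 percent' or '3.5%' to 3.5. Fails on spelled-out numbers."""
    cleaned = text.lower().replace("percent", "").replace("%", "").strip()
    return float(cleaned)

clean_record = {
    "publication_date": to_iso_date(raw_record["publication_date"]),
    "cpi_march_value": to_float_percent(raw_record["cpi_march_value"]),
}
print(clean_record)
```

Both helpers raise a `ValueError` as soon as the model picks another format, for example "14/04/2026" or "three point five percent", so every new variant means another branch to write and maintain.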

What Changes When You Define The Schema

To have guarantees on the output format, you can use the following code:

import os
import json
import instructor
from openai import OpenAI
from pydantic import BaseModel, Field
from typing import Optional, Literal

# Scraped content
SCRAPED_TEXT = """
Fed Signals Caution as Inflation Data Disappoints
By Sarah M. Connelly | April 14, 2026 | Economics & Policy
The Federal Reserve signaled on Monday that it remains in no rush to cut interest rates,
after fresh inflation data showed consumer prices rose more than expected last month.
Jerome Powell, speaking at a conference in Washington, said the central bank needs
"greater confidence" that inflation is moving sustainably toward its two-percent target
before reducing borrowing costs.
The Consumer Price Index climbed 3.5 percent in March, up from 3.2 percent in February,
according to the Labor Department. Economists polled by Reuters had forecast a reading
of 3.3 percent. Core CPI, which strips out food and energy, rose 3.8 percent year-over-year.
Markets reacted sharply. The S&P 500 fell nearly one point five percent by midday,
while the yield on the 10-year Treasury note jumped to four point six percent.
Goldman Sachs revised its forecast, pushing back its expected first rate cut from June
to September. JPMorgan analysts said two cuts in 2026 now look "optimistic."
Powell emphasized that the Fed is not considering rate hikes at this stage, but stressed
that the path back to two percent inflation "may take longer than previously thought."
The next Fed meeting is scheduled for the first week of May.
"""

# Validation schema
class ArticleExtraction(BaseModel):
    title: str = Field(description="The article's headline")
    author: Optional[str] = Field(description="Full name of the author if explicitly mentioned")
    publication_date: Optional[str] = Field(description="Publication date in ISO 8601 format (YYYY-MM-DD)")
    mentioned_organizations: list[str] = Field(description="All organizations referenced in the article")
    cpi_march_value: Optional[float] = Field(description="CPI value as a float (e.g. 3.5)")
    key_claim: str = Field(description="The central argument or finding of the article in one sentence")
    market_sentiment: Literal["positive", "negative", "neutral"] = Field(
        description="Overall market sentiment expressed in the article"
    )

# Wrap the OpenAI client so that it accepts a response_model
structured_client = instructor.from_openai(OpenAI(api_key=os.environ.get("YOUR_OPENAI_API_KEY")))

# Ask for an ArticleExtraction object instead of free text
extraction = structured_client.chat.completions.create(
    model="gpt-4o",
    response_model=ArticleExtraction,
    messages=[
        {
            "role": "user",
            "content": f"Extract structured information from the following article:\n\n{SCRAPED_TEXT}"
        }
    ]
)

# Print the validated object as JSON
print(extraction.model_dump_json(indent=2))

# Print each field with its value and Python type
print("\n" + "=" * 60)
print("INSPECTION OUTPUT")
print("=" * 60)
for field, value in extraction.model_dump().items():
    print(f" {field}: {repr(value)} → type: {type(value).__name__}")

The above code leverages two fundamental libraries:

Pydantic: This is a Python data validation library. You define a schema as a Python class, declare the fields and their types, and Pydantic enforces that any data you put into that class matches what you declared.

Instructor: This is the bridge between Pydantic and the LLM. The core problem it solves is that LLMs’ APIs return text, but Pydantic validates Python objects. So, something has to sit in the middle, take the LLM’s response, parse it into the structure your Pydantic model expects, and retry the call if the output doesn’t validate. That’s what Instructor does. Without Instructor, you would have to manually prompt the model to return JSON, parse that JSON yourself, handle malformed responses, write retry logic, and coerce types by hand.
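To make the "validate and retry" idea tangible, here is a minimal sketch of that loop written by hand. It only illustrates the general pattern and is not Instructor's actual implementation. The validator, the fake model, and all function names are hypothetical.

```python
import json
from typing import Callable

def validate_cpi(data: object) -> dict:
    """Hypothetical validator: require a JSON object with a float 'cpi_march_value'."""
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    value = data.get("cpi_march_value")
    if not isinstance(value, float):
        raise ValueError(f"cpi_march_value must be a float, got {value!r}")
    return data

def extract_with_retries(call_model: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Call the model, validate the reply, and re-ask with the error on failure."""
    last_error = None
    for _ in range(max_attempts):
        full_prompt = prompt
        if last_error is not None:
            full_prompt = f"{prompt}\n\nPrevious reply was rejected: {last_error}"
        try:
            return validate_cpi(json.loads(call_model(full_prompt)))
        except ValueError as exc:  # json.JSONDecodeError is a ValueError subclass
            last_error = exc
    raise RuntimeError(f"no valid output after {max_attempts} attempts: {last_error}")

# Fake model for offline testing: wrong type first, correct on the second try.
replies = iter(['{"cpi_march_value": "3.5 percent"}', '{"cpi_march_value": 3.5}'])
prompts_seen = []

def fake_model(prompt: str) -> str:
    prompts_seen.append(prompt)
    return next(replies)

result = extract_with_retries(fake_model, "Extract the March CPI value as JSON.")
print(result, len(prompts_seen))
```

The second prompt carries the validation error back to the model, which gives it a chance to correct itself. This is the kind of plumbing you would otherwise write and maintain yourself.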

By using these two libraries, the ArticleExtraction() class does the following:

Type enforcement: Defines cpi_march_value as a float. A response such as "3.5 percent" fails validation, so the value that reaches your pipeline is an actual number (3.5) instead of a string, unlike in the previous example.

Controls formatting and vocabulary: The Literal type on market_sentiment restricts the LLM’s output to "positive", "negative", or "neutral". The model cannot invent new categories. Similarly, the description for publication_date explicitly demands the ISO 8601 format.

Built-in prompting: The Field(description="...") parameters serve a dual purpose. First, they document the code for developers. Second, under the hood, the Instructor library feeds these exact descriptions to the LLM as targeted instructions. This helps the model understand exactly what “key claim” or “publication date” means in this context.

Graceful omissions: Wrapping fields like author in Optional[...] gives the model permission to safely return a null value if the information isn’t present in the scraped text. This reduces the risk of hallucinations.
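One detail is worth a closer look: in the schema above, publication_date is declared as a string, so the ISO 8601 format is requested through the field description rather than checked by the type. If you want the format itself to be validated, one option is to declare the field as a `datetime.date`. The sketch below assumes Pydantic v2. The `DatedArticle` model is a hypothetical, reduced version of the schema.

```python
from datetime import date
from typing import Optional

from pydantic import BaseModel, ValidationError

class DatedArticle(BaseModel):
    """Reduced, hypothetical schema: only the fields needed for this example."""
    title: str
    publication_date: Optional[date] = None

# An ISO 8601 string is parsed into a real date object.
ok = DatedArticle.model_validate(
    {"title": "Fed Signals Caution", "publication_date": "2026-04-14"}
)
print(type(ok.publication_date).__name__, ok.publication_date)

# A free-form date string is rejected instead of silently passing through.
try:
    DatedArticle.model_validate(
        {"title": "Fed Signals Caution", "publication_date": "April 14, 2026"}
    )
    rejected = False
except ValidationError:
    rejected = True
print(rejected)
```

With a date-typed field, a reply like "April 14, 2026" becomes a validation error, which a retry mechanism can then feed back to the model.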

The JSON output is as follows:

{
  "title": "Fed Signals Caution as Inflation Data Disappoints",
  "author": "Sarah M. Connelly",
  "publication_date": "2026-04-14",
  "mentioned_organizations": [
    "Federal Reserve",
    "Labor Department",
    "Reuters",
    "Goldman Sachs",
    "JPMorgan"
  ],
  "cpi_march_value": 3.5,
  "key_claim": "The Federal Reserve remains cautious about cutting interest rates because inflation has not yet shown sufficient progress toward its two-percent target.",
  "market_sentiment": "negative"
}

As you can see, now the CPI is a float, and the publication date is in ISO 8601.

The inspection output is the following:

============================================================
INSPECTION OUTPUT
============================================================
 title: 'Fed Signals Caution as Inflation Data Disappoints' → type: str
 author: 'Sarah M. Connelly' → type: str
 publication_date: '2026-04-14' → type: str
 mentioned_organizations: ['Federal Reserve', 'Labor Department', 'Reuters', 'Goldman Sachs', 'JPMorgan'] → type: list
 cpi_march_value: 3.5 → type: float
 key_claim: 'The Federal Reserve remains cautious about cutting interest rates because inflation has not yet shown sufficient progress toward its two-percent target.' → type: str
 market_sentiment: 'negative' → type: str

This inspection makes it immediately visible that the data types are correct.
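Once the types are right, loading the record into a database needs no intermediate cleanup. The sketch below uses an in-memory SQLite database from the Python standard library. The table name and column layout are assumptions chosen for this example.

```python
import sqlite3

# A subset of the validated record from the output above, as a plain dict.
record = {
    "title": "Fed Signals Caution as Inflation Data Disappoints",
    "publication_date": "2026-04-14",
    "cpi_march_value": 3.5,
    "market_sentiment": "negative",
}

conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE articles (
        title TEXT NOT NULL,
        publication_date TEXT,
        cpi_march_value REAL,
        market_sentiment TEXT
            CHECK (market_sentiment IN ('positive', 'negative', 'neutral'))
    )
    """
)
conn.execute(
    "INSERT INTO articles VALUES "
    "(:title, :publication_date, :cpi_march_value, :market_sentiment)",
    record,
)

# ISO 8601 strings sort chronologically, and REAL columns aggregate directly.
row = conn.execute(
    "SELECT COUNT(*), AVG(cpi_march_value), MAX(publication_date) FROM articles"
).fetchone()
print(row)
conn.close()
```

The same insert would have failed the CHECK constraint with the "Negative" label from the unconstrained call, and its "3.5 percent" string would have been stored as text and treated as 0 by AVG.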

Conclusion

In this article, you learned what unstructured text actually costs a data pipeline. You saw how classical NLP made structured extraction possible but fragile, and how LLMs removed the domain constraints that NLP never solved. You also learned why LLMs alone are not enough and saw a practical solution to provide “guardrails” for LLMs so that their output follows a defined schema.

So, let us know: how are you managing unstructured text after you scrape it?