THE LAB #99: HTTP Caching for Web Scraping

How Conditional Requests Can Cut Your Proxy Bill.

One of the biggest cost drivers in recurring scraping operations is fetching pages daily, or even several times a day, often through paid proxies, only to discover that they have not changed since the last run.

In price monitoring applications this is fairly common. Say you are monitoring prices every hour across 50,000 product pages: it’s highly probable that most of them still show the same price they showed an hour ago. You are paying your proxy provider for bandwidth that carries identical data, over and over.

The scraping industry is well aware of this problem. A recent analysis by ScrapeOps found that even though proxy prices have dropped by 67% over the past five years, the cost per successful payload has actually increased by 133%, mostly because anti-bot defenses now require heavier infrastructure. When each request costs more, wasting them on unchanged pages hurts even more.

Several approaches try to solve this. Tools like changedetection.io monitor pages for visual or structural changes and alert you when something is different. On the more technical side, Altay Akkus recently explored using SimHash as a client-side fingerprint to determine whether a document has changed since the last crawl, without downloading the full body. These are valid strategies, but they all share one trait: they require you to build and maintain the change detection logic yourself.

What you might not know is that the HTTP protocol already has a native mechanism for this, and it has been part of the HTTP/1.1 specification since the late 1990s. It is called conditional requests, and it lets the server itself tell your scraper “nothing has changed” by responding with a 304 status and zero bytes of body. No diffing, no hashing, no client-side state management beyond storing a single header value per URL.

We have written about proxy cost optimization before in articles like Optimizing Proxy Usage for Large-Scale Scraping and Analyzing the Cost of a Web Scraping Project, but we have never covered this technique. In this article, we will test it against real e-commerce sites and measure exactly how much bandwidth and money it can save.

How HTTP caching works (the short version)

When a web server responds to a request, it can include headers that describe the freshness and identity of the content. Two of these headers are relevant for our purposes.

The first is ETag, short for Entity Tag. It is a string that uniquely identifies a specific version of a resource. Think of it as a fingerprint of the page content. When the content changes, the ETag changes.

The second is Last-Modified, a timestamp indicating when the resource was last updated.

These two headers enable what HTTP calls conditional requests. The idea is simple. After your first request, you store the ETag (or the Last-Modified value) returned by the server. On the next request to the same URL, you send it back using the `If-None-Match` header (for ETags) or `If-Modified-Since` (for timestamps). The server compares your stored value with the current one. If they match, the server responds with status 304 Not Modified and an empty body. If they do not match, you get a regular 200 response with the fresh content.
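
The exchange described above can be sketched without any network access. The snippet below is a simplified illustration, not a real server: `build_conditional_headers` and `evaluate_conditional` are hypothetical helper names, the ETag values are made up, and ETags are compared as plain strings (real servers also handle weak validators and lists of ETags). It follows the rule from the HTTP specification that `If-None-Match` takes precedence over `If-Modified-Since` when both are sent.

```python
from email.utils import parsedate_to_datetime


def build_conditional_headers(base_headers, etag=None, last_modified=None):
    """Client side: copy the base headers and attach the stored validators."""
    headers = dict(base_headers)
    if etag:
        headers["If-None-Match"] = etag
    if last_modified:
        headers["If-Modified-Since"] = last_modified
    return headers


def evaluate_conditional(request_headers, current_etag=None, current_last_modified=None):
    """Server side (simplified): 304 if the client's copy is still current, else 200."""
    client_etag = request_headers.get("If-None-Match")
    if client_etag is not None:
        # If-None-Match wins; If-Modified-Since is ignored when it is present.
        return 304 if client_etag == current_etag else 200
    since = request_headers.get("If-Modified-Since")
    if since and current_last_modified:
        unchanged = parsedate_to_datetime(current_last_modified) <= parsedate_to_datetime(since)
        return 304 if unchanged else 200
    return 200


stored_etag = '"v1-abc123"'  # made-up value saved from a previous 200 response
headers = build_conditional_headers({"Accept": "text/html"}, etag=stored_etag)
print(evaluate_conditional(headers, current_etag='"v1-abc123"'))  # 304
print(evaluate_conditional(headers, current_etag='"v2-def456"'))  # 200
```

The client side is only a couple of extra headers; all the comparison work happens on the server.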

A 304 response contains zero bytes of body. For a proxy billed per GB, that is a request that costs almost nothing in bandwidth.
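
To put a rough number on that, here is a back-of-the-envelope estimate. Only the 50,000 pages and the hourly schedule come from the example earlier in this article; the page size, header overhead, share of unchanged pages, and price per GB are assumptions picked purely for illustration, and the function name `monthly_proxy_cost` is hypothetical. It also assumes every target actually answers with 304, which is not guaranteed.

```python
def monthly_proxy_cost(pages, runs_per_day, page_bytes, header_bytes,
                       unchanged_ratio, price_per_gb, days=30):
    """Return (cost_without_conditional, cost_with_conditional) for one month."""
    total_requests = pages * runs_per_day * days
    # Without conditional requests every fetch carries headers plus the full body.
    full_bytes = total_requests * (header_bytes + page_bytes)
    # With them, unchanged pages cost only the headers of the 304 exchange.
    conditional_bytes = total_requests * (header_bytes + (1 - unchanged_ratio) * page_bytes)
    bytes_per_gb = 1_000_000_000
    return (full_bytes / bytes_per_gb * price_per_gb,
            conditional_bytes / bytes_per_gb * price_per_gb)


# Assumed inputs: 200 KB pages, 1 KB of headers, 90% unchanged, 5 per GB.
without, with_cond = monthly_proxy_cost(
    pages=50_000, runs_per_day=24, page_bytes=200_000,
    header_bytes=1_000, unchanged_ratio=0.9, price_per_gb=5.0,
)
print(f"without: {without:,.0f}  with: {with_cond:,.0f}")
# roughly 36,180 vs 3,780 per month under these assumptions
```

The exact figures matter less than the shape of the formula: the saving scales directly with the share of pages that did not change.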

The tools we used

The HTTP caching technique itself is protocol-level and works with any HTTP client that allows setting custom headers. You could implement it with Python’s `requests`, httpx, or even raw curl.

For this article, we used curl_cffi, a Python HTTP client built on top of curl-impersonate. Its main strength for our purposes is TLS fingerprinting: it can impersonate the TLS handshake of real browsers (Chrome, Firefox, Safari), which prevents e-commerce sites from blocking the request before we even get to test caching behavior. Without TLS fingerprinting, some of the e-commerce targets we wanted to test would have returned 403 immediately, making it impossible to evaluate their caching support.

Later in the article, we will see whether the same approach works with Scrapy.

The audit methodology

Before attempting conditional requests, we need to check whether a target supports them. We wrote a simple audit function that makes two requests to any URL.

The first request is a standard GET. We capture the ETag, Last-Modified, and Cache-Control headers from the response, along with the response body size.

If an ETag or Last-Modified header is present, we make a second request with the corresponding conditional header (If-None-Match or If-Modified-Since). If the server responds with 304, the site supports conditional requests and we measure the bandwidth saving.

    import time

    from curl_cffi import requests


    def audit_caching(url: str) -> dict:
        """Check whether a URL exposes cache validators and honors conditional requests."""
        # Browser-like headers; the TLS fingerprint comes from impersonate="chrome".
        headers = {
            "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
            "Accept-Language": "en-US,en;q=0.9",
            "Accept-Encoding": "gzip, deflate, br",
        }

        # First request: a plain GET to capture the validators and the full body size.
        resp = requests.get(url, headers=headers, impersonate="chrome", timeout=30)
        resp_headers = {k.lower(): v for k, v in resp.headers.items()}

        etag = resp_headers.get("etag")
        last_modified = resp_headers.get("last-modified")
        cache_control = resp_headers.get("cache-control")
        response_size = len(resp.content)

        result = {
            "url": url,
            "status": resp.status_code,
            "etag": etag,
            "last_modified": last_modified,
            "cache_control": cache_control,
            "response_size_bytes": response_size,
            "supports_304": False,
        }

        # Second request: only if the server gave us something to validate against.
        if etag or last_modified:
            time.sleep(2)

            cond_headers = dict(headers)
            if etag:
                cond_headers["If-None-Match"] = etag
            if last_modified:
                cond_headers["If-Modified-Since"] = last_modified

            cond_resp = requests.get(
                url, headers=cond_headers, impersonate="chrome", timeout=30
            )

            result["conditional_status"] = cond_resp.status_code
            result["conditional_size_bytes"] = len(cond_resp.content)
            result["supports_304"] = cond_resp.status_code == 304

        return result

You can find the code in our GitHub repository reserved for paying users, inside the folder 99.CONDITIONAL_SCRAPING.

Shopify stores: full conditional request support

We focused our testing on Shopify stores because, while working on various scraping projects, we came across several Shopify-hosted sites that had this caching system enabled. Shopify powers hundreds of thousands of online stores and is one of the most common scraping targets in e-commerce, so the finding felt worth investigating systematically. The results were clear: Shopify stores with the native page cache enabled support conditional requests out of the box.

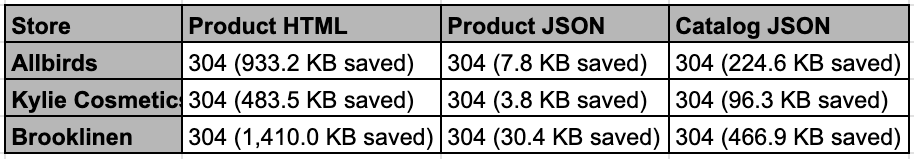

Allbirds, Kylie Cosmetics, and Brooklinen all returned 304 responses consistently. Here is what we measured on Allbirds:

    URL: https://www.allbirds.com/products/mens-tree-runners.json
    Status: 200
    Response size: 7,961 bytes

    Caching headers:
      ETag: "page_cache:11044168:ProductDetailsController:de822deb7906aa6f9932541f4fe3dae9"
      Last-Modified: not present
      Cache-Control: not present

    Conditional request support:
      304 Not Modified: YES
      Conditional response size: 0 bytes
      Bandwidth saving: 100.0%

The saving is 100% because the 304 response body contains exactly zero bytes. The only cost is the request/response headers, which are a few hundred bytes.
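
The "Bandwidth saving" figure can be derived from the two body sizes recorded by the audit function. This is a minimal sketch, assuming a result dict with the same keys that `audit_caching` returns; `bandwidth_saving_pct` is a hypothetical helper name, and header overhead is deliberately left out, as it is in the report.

```python
def bandwidth_saving_pct(result: dict) -> float:
    """Percentage of body bytes saved by the conditional request (headers not counted)."""
    full = result.get("response_size_bytes", 0)
    if not result.get("supports_304") or full == 0:
        return 0.0  # no 304 support (or an empty page): nothing saved
    conditional = result.get("conditional_size_bytes", 0)
    return 100.0 * (full - conditional) / full


# Body sizes taken from the Allbirds measurement above.
allbirds = {
    "response_size_bytes": 7961,
    "conditional_size_bytes": 0,
    "supports_304": True,
}
print(f"Bandwidth saving: {bandwidth_saving_pct(allbirds):.1f}%")  # Bandwidth saving: 100.0%
```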

This behavior was consistent across three types of Shopify endpoints. The Product HTML page is the standard storefront URL that a browser would load (e.g. /products/mens-tree-runners), which includes the full rendered page with images, reviews, and theme assets. The Product JSON endpoint is the same URL with .json appended (e.g. /products/mens-tree-runners.json), which returns only the structured product data: variants, prices, inventory, and metadata. The Catalog JSON endpoint (/products.json) returns the first page of the store’s entire product catalog in a single response.
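
For reference, the three URL patterns can be built from a store's base URL and a product handle. A small sketch: `shopify_endpoints` is a hypothetical helper, and it only assembles the paths described above without checking that a given store exposes them.

```python
from urllib.parse import urljoin


def shopify_endpoints(store_url: str, handle: str) -> dict:
    """Build the three endpoint URLs for one product handle."""
    base = store_url.rstrip("/") + "/"  # urljoin needs a trailing slash on the base
    return {
        "product_html": urljoin(base, f"products/{handle}"),
        "product_json": urljoin(base, f"products/{handle}.json"),
        "catalog_json": urljoin(base, "products.json"),
    }


urls = shopify_endpoints("https://www.allbirds.com", "mens-tree-runners")
print(urls["product_json"])
# https://www.allbirds.com/products/mens-tree-runners.json
```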

We ran repeated conditional requests on each endpoint and confirmed that all returned 304 consistently. The ETag stayed stable as long as the product data did not change.
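
In a recurring job, this translates into keeping one ETag per URL and skipping the parsing step whenever the server answers 304. The sketch below shows one possible shape for that loop; it is not the code used for the measurements above. `ConditionalFetcher` and its `transport` callable are hypothetical, and the transport is injected so the logic can be exercised without network access. In practice it would wrap a real client call, and the ETag store would be persisted between runs.

```python
from typing import Callable, Dict, Optional, Tuple

# transport(url, headers) -> (status_code, response_headers, body)
Transport = Callable[[str, Dict[str, str]], Tuple[int, Dict[str, str], bytes]]


class ConditionalFetcher:
    def __init__(self, transport: Transport):
        self.transport = transport
        self.etags: Dict[str, str] = {}  # in-memory here; a database or file in real use

    def fetch(self, url: str) -> Optional[bytes]:
        """Return the response body, or None when the server answered 304."""
        headers: Dict[str, str] = {}
        if url in self.etags:
            headers["If-None-Match"] = self.etags[url]
        status, resp_headers, body = self.transport(url, headers)
        if status == 304:
            return None  # unchanged: nothing to download or parse
        etag = {k.lower(): v for k, v in resp_headers.items()}.get("etag")
        if status == 200 and etag:
            self.etags[url] = etag  # remember the validator for the next run
        return body


def fake_transport(url, headers):
    """Stand-in for a real HTTP call: one fixed page with a made-up ETag."""
    etag = '"demo-etag-1"'
    if headers.get("If-None-Match") == etag:
        return 304, {"ETag": etag}, b""
    return 200, {"ETag": etag}, b'{"price": "98.00"}'


fetcher = ConditionalFetcher(fake_transport)
first = fetcher.fetch("https://example.com/products/demo.json")   # full body on the first run
second = fetcher.fetch("https://example.com/products/demo.json")  # None: the server said 304
```

Returning `None` for unchanged pages keeps the calling code simple: anything that is not `None` goes to the parser, everything else is skipped.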